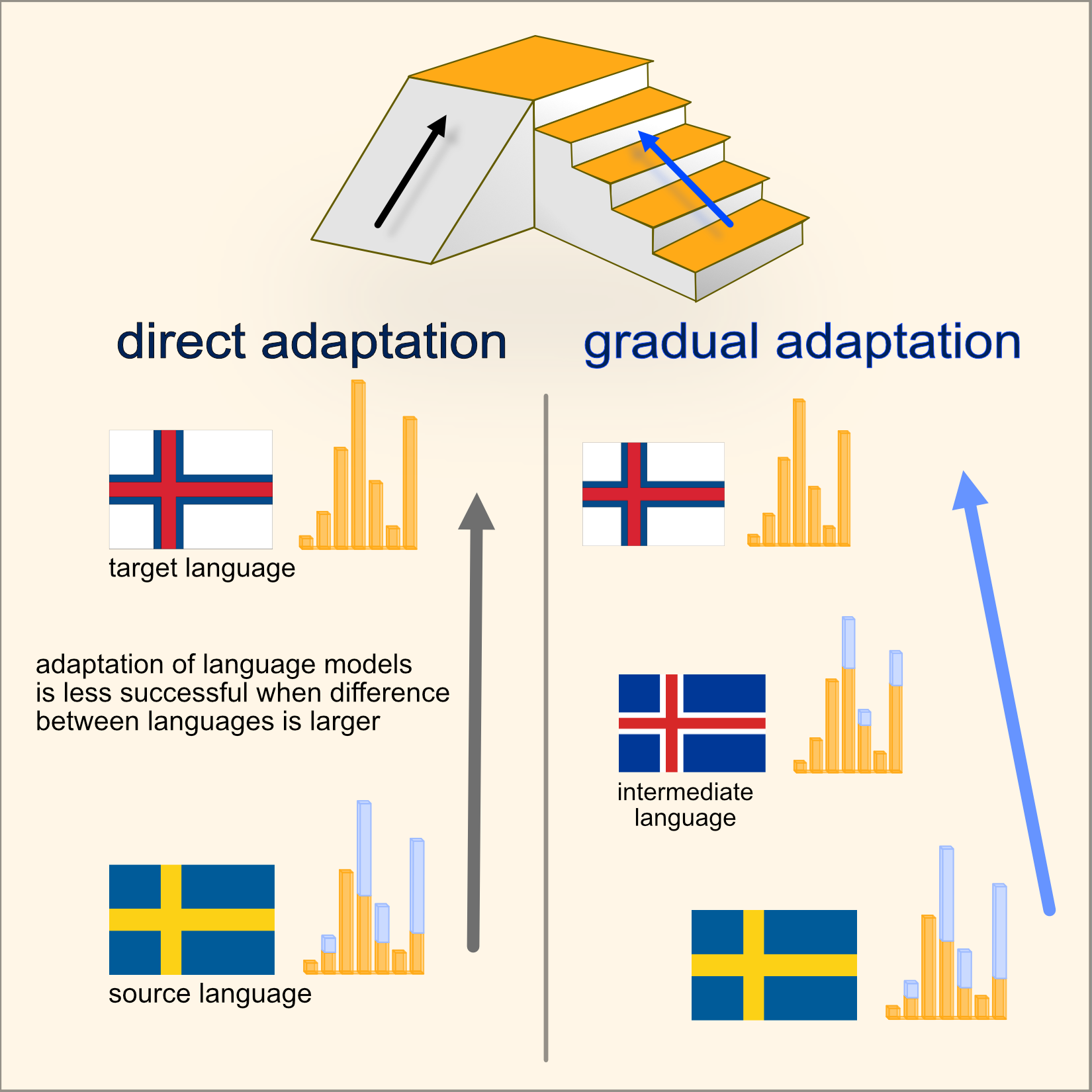

Gradual Language Model Adaptation Using Fine-Grained Typology

Extended abstract accepted at the SIGTYP workshop, presented at EACL 2023 in Dubrovnik.

My main motivation is to create better-performing NLP tools for diverse languages, with a special focus on data-poor settings. In researching data-efficient methods such as language adapters, I draw on my background in linguistics (BA Linguistics, University of Cambridge) and in NLP and deep learning (MA Human Language Technology, Vrije Universiteit Amsterdam).

I started my PhD in September 2022 with Johannes Bjerva at Aalborg University in Copenhagen, Denmark. In my first year, my extended abstract Gradual Language Model Adaptation Using Fine-Grained Typology was accepted at the SIGTYP workshop at EACL 2023.

In the coming years, I aim to build on my existing knowledge and develop my skills to pursue these goals, especially with respect to Uralic languages, which receive little attention in current NLP research.

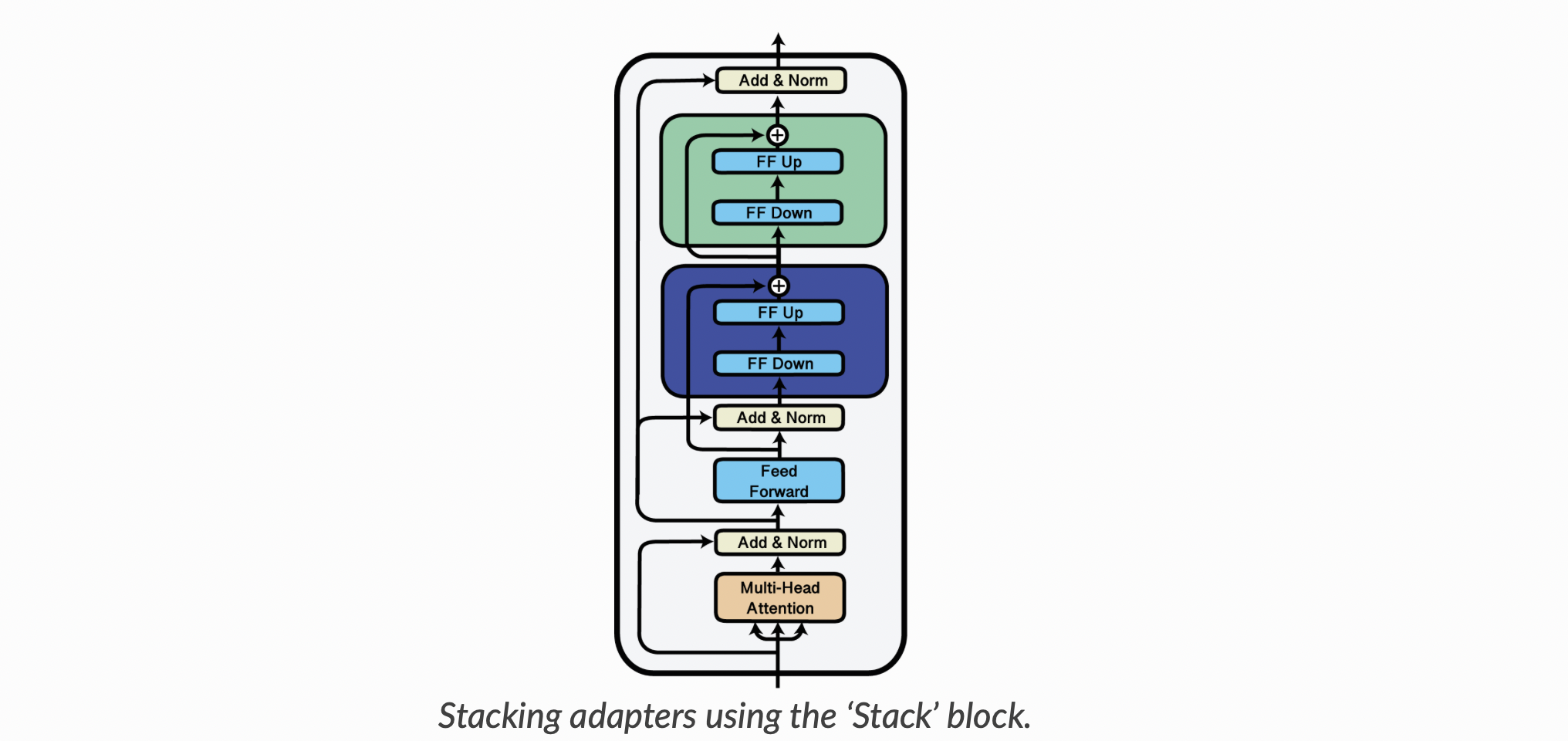

Thesis internship project with the Vrije Universiteit Amsterdam and TAUS, image credits to AdapterHub.

Abstract accepted for submission of a full chapter to the volume Multilingual DH. Collaboration with Lisa Beinborn and Johannes Bjerva.

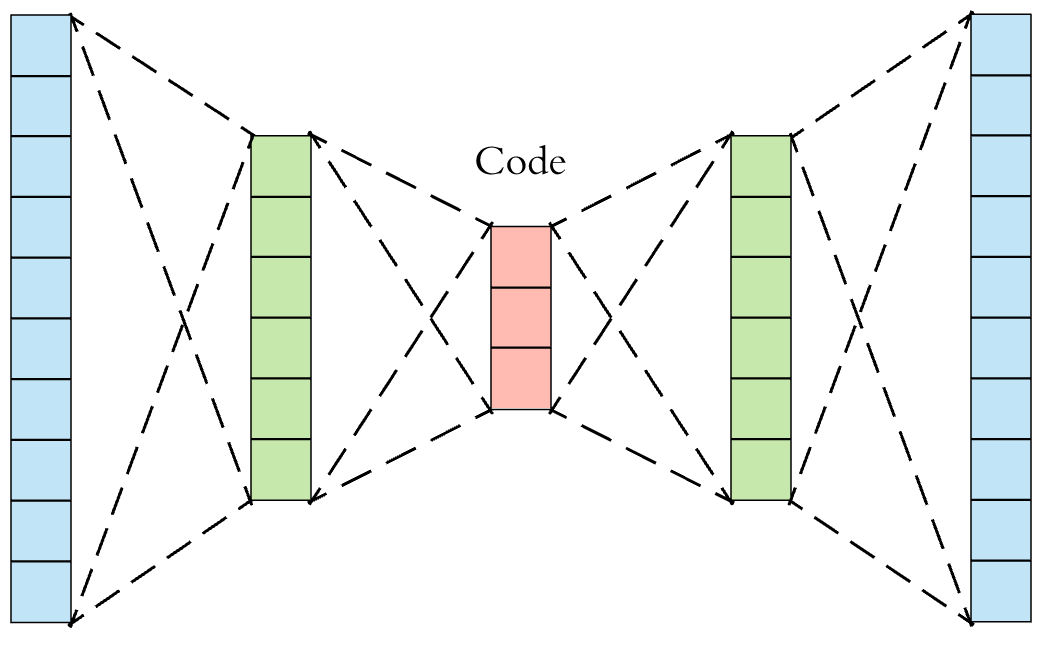

Internship project with TAUS, image credits to Arden Dertat on Towards Data Science.

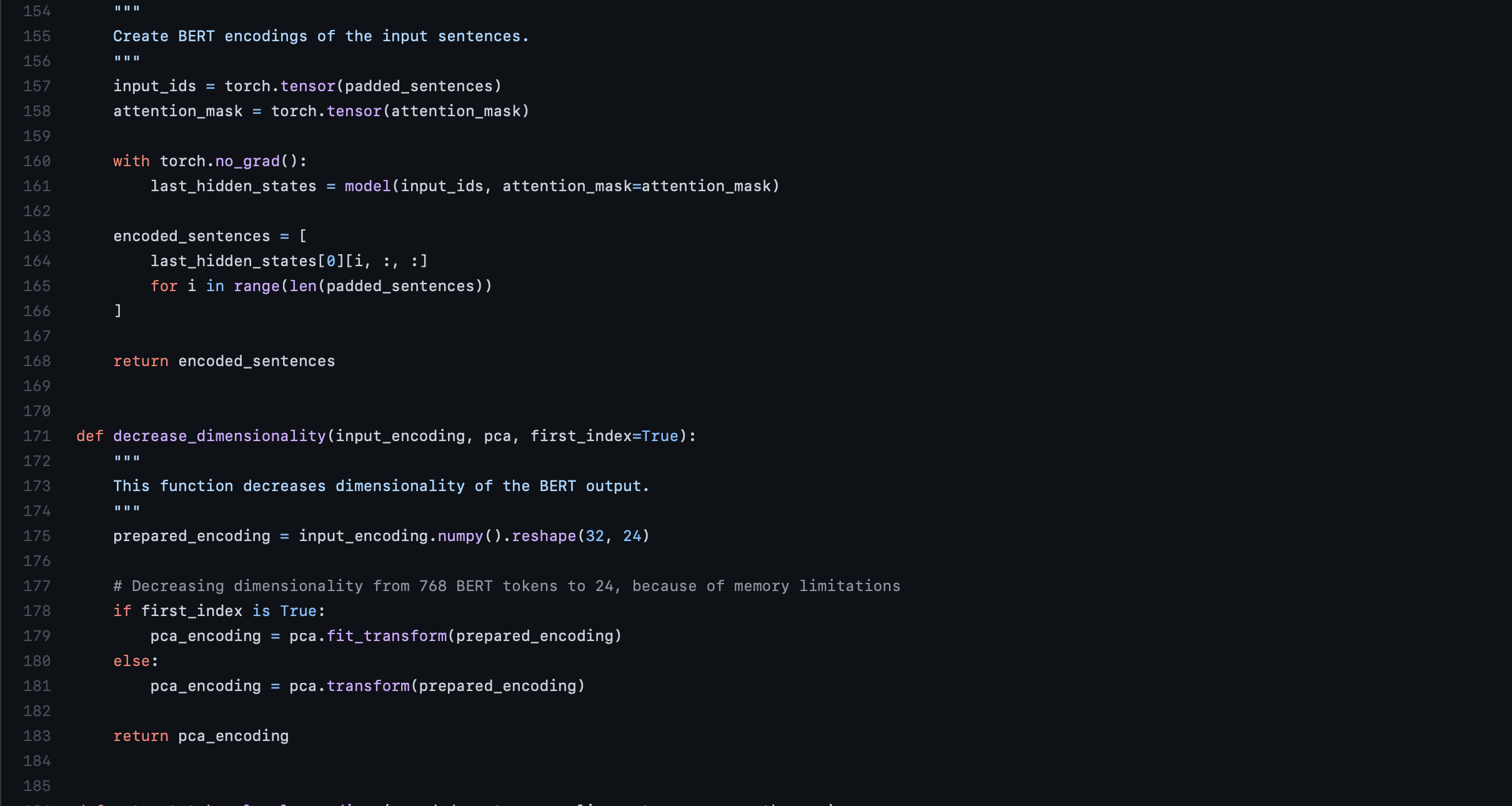

Cross-lingual linguistic probing using multilingual BERT, a project for LOT (Netherlands Research School of Linguistics).

Group project carried out at Vrije Universiteit Amsterdam, using DistilBERT for attribution linking.

Group project carried out at Vrije Universiteit Amsterdam as part of the larger project Chronicling Novelty, image credits to Chronicling Novelty.